The Final Grift

I'm generally what most AI Boosters would call a "Doomer": I don't see the present value in LLMs with how they're trained, implemented, and utilized. I believe they're dumbing down our already idiotic populace, and reports are starting to support my position. They output slop, flooding an internet already hobbled by advertising and algorithms with even more sewage - both graphical and literary. They're positioned almost exclusively to displace higher-paying jobs that require expertise and experience - the last remaining careers that still offer entry into the vaunted "Middle Class" in Western Society. Their biggest boosters champion these prediction machines as revolutionary to civilization itself, the key to unlocking infinite productivity and resource exploitation while solving all of our ills, rocketing humanity into a Star Trek-esque future of peace, harmony, and meritocracy; in reality, they openly court fascist regimes for taxpayer funding and business-friendly laws to protect their wealth, rather than ensure it's appropriately shared with the populace they claim to benefit. This doesn't even get into the environmental problems of modern AI, exacerbating and accelerating an ongoing climate crisis as desperately needed nuclear, fresh water, and renewable energy sources are diverted to speculative data center builds.

So yes, I'm a bit of a doomer on AI as it presently stands. That's not to say I'm a doomer on LLMs themselves, however! I can see their value as accessibility aids, for instance, providing a more comprehensive user experience for speech-to-text interactivity or as personalized assistance that can help you better juggle schedules, tasks, or chores. I absolutely think they have value in helping build a more "programmer" mindset in the average person, letting them speak a desired solution to a problem in their home and trusting the LLM to put together a solution in simple code - "Turn on the lights in the living room when I get home from work", for instance, could have a connected LLM spit out appropriate code for your home assistant, and then test its results with you before implementation. Since neither of those markets is particularly profitable, we don't really see exploitation of either outside of insanely-priced, cloud-tethered subscription services that mainly serve to remove your sovereignty and hoard your data for corporate surveillance.

AI has its problems, but also has some promise. What I'd like to tackle in this post is what I believe to be the real, final grift by these AI companies training what are called "foundational models": permanently exempting corporations from Copyright Laws.

Laws for Thee, not for Me

If you've been reading technology-focused news of late, you've likely seen a smattering of articles about OpenAI's defense against copyright infringement claims: they totally stole that content, but they should be allowed to do so because winning is a matter of National Security.

That's right. OpenAI (and now Google) claim it's a matter of National Security that they be allowed to hoover up whatever they want for training purposes, and never be held accountable for their actions. Specifically, they cite China's long-standing violation of global copyright and IP laws as justification for doing the same thing in the United States; if China can do it, why can't we?

To be fair, they have a valid point. The United States (and the West in general) have long refused to address China's flagrant disregard for intellectual property laws because it makes them money at the end of the day. It's basically just a "Gentlemen's Agreement" of sorts that China can do as it pleases domestically, so long as they don't export their infringement efforts abroad, and if that means markets of bootleg media for pennies on the dollar in exchange for cheap labor to manufacture the Western merchandise for said media, then companies will look the other way.

Getting back into the technicalities of their defense, however, AI companies are making a very specific claim that harks back to the days of hoisting the Jolly Roger in the mid-2000s: their theft of IP and violation of copyright protections is permissible under Fair Use. As a very quick primer for those who don't want to click through to Wikipedia, Fair Use is the concept that a given entity has rights to use copyrighted material in certain ways, without prior permission of the copyright holder. Its use is more common than most people realize: media servers like Plex or Jellyfin couldn't (legally) exist without Fair Use, as ripping CDs or other media for personal use (backups, local streaming, transforming content to play on different mediums you also own, etc.) is not explicitly allowed by Copyright Holders; they'd much rather you rent the same media multiple times for multiple devices indefinitely, instead of purchasing a 4K UHD disc and ripping it to Plex for transcoding as needed. Multiple lawsuits have been fought over the limits of Fair Use before, from CD ripping, to VHS recording, to software APIs and beyond, all of which generally held that consumers who have purchased content are allowed to transform it for personal use, provided they do not share their copies with others.

Fair Use also governs "transformative works", and this is where AI companies are specifically planting their legal defense. Again, as a very quick primer, transformative use is where you take something someone else definitely and clearly owns, then transform it into something wholly new in a different manner or purpose from the original work. This is why music sampling is generally allowed, provided the samples in question are small enough to be considered trivial (also known as de minimis) - the samples form a part of a different, larger work, but not a substantial amount of it. AI companies are making a similar argument: by ingesting so much data, none of it specifically makes up a significant portion of any output their models might produce.

In other words, they claim AI is equivalent to music sampling, and therefore Fair Use applies.

The Wolf in Legal Robes

While their argument seems plausible on the surface, it carries with it a very disturbing implication: that any use of any content for any profitable enterprise by any company could be considered "Fair Use" if the content pool is large enough and the resultant output small enough. It fundamentally turns the entire notion of "Fair Use" on its head, ensuring that companies alone can freely infringe on the copyrights of others without consequence - solely because they can afford to store all that stuff and transform it into much smaller pieces.

This is not a new approach, of course. Spotify allegedly used similar tactics in its startup days, until it could secure agreements with rightsholders; Napster had tried similar tactics a few years earlier but to no avail, which arguably opened the door for Spotify to prosper by starting outside the United States first, establishing credibility and an audience to force the industry's hand in the USA. Given how well Spotify has done for itself despite (or because of) not originally being an American company, it makes a certain amount of sense that the US would be frightened of losing dominance in another field to a foreign competitor.

Still, the consequences are quite dire if AI companies prevail on their argument for Fair Use, as it ends up leaving copyright solely a problem for the consumer (i.e., the people), but not any business that profits from it. It's a calculated tactic to gut copyright laws for all corporate use, which itself is the point. After all, corporations have never hidden the fact that the one thing they hate more than anything else is compensating labor. They regularly spend far more busting Unions than it would've cost to just give the workers livable wages and working conditions in the first place. They raise prices far faster than wage growth for cars, for streaming services, for basic goods, meaning workers must buy less with declining purchasing power while their corporate employers post record profits, record margins, and record buybacks, all against a backdrop of continued layoffs. At a time when corporate greed is at all-time highs, they're taking the opportunity to fundamentally reshape the global economy as hostile to the worker through copyright law. They're attempting to take the de minimis portion of Fair Use, and claim it applies to any output, while exempting the input.

Consider what such a world would look like for a moment. Your social media selfie is already in an AI training set somewhere, but what if a company found it tasteful enough to use as advertising? If AI companies were to prevail, that company could plausibly claim fair use so long as the ad was targeted at a narrow group of consumers relative to the global market: the output would be de minimis in scope, shown to just five to ten customers out of millions whose advertising profiles said you would be the most attractive model to them specifically. What about your photography site, where you sell prints? An extensively interpreted "Fair Use" like AI companies are demanding would give companies carte blanche to steal it all for whatever they intend to use it for, so long as the output is minimal - like using it as promotional artwork at a single, local event with a minimal clientele compared to their global audience. The same goes for artists, musicians, software developers, writers, and more: this proposed Fair Use case would mean that so long as corporate use of your work is minimal enough in the larger marketplace, they could do as they wish with your output without compensation.

Big Problems, Big Consequences

Before I get to the meat of my point, let's take a break for a moment and think through what the immediate options are with current models and data sets. Let's say that Courts see the issue with granting Fair Use to AI training for all copyrighted works, and firmly come down on that being a violation of Copyright Law. The potential remedies for such a verdict are effectively two-fold, with both being rooted in destruction - an outcome that's not entirely ideal in either case, but is important to reckon with before I make my proposal in the call to action.

Remedy Number One: destruction of all models built on copyrighted material.

This is the harsher of the two, as it effectively makes all LLMs and their offshoots "illegal" due to the fundamental nature of their training. As copyright law applies by default, it means everything that everyone has ever created on the internet has some form of copyright applied to it, regardless of nationality and unless explicitly stated otherwise (e.g., a license on a GitHub repo). It would mean that AI companies would have to prove that all training data used was acquired by legal means, something they cannot possibly do at this time. Any company that cannot prove it had licensing for all training data before the model was created would have to delete the model, and these companies likely did a poor job recording the sources of their data sets, which would have enabled them to track down creators and negotiate licensing deals to legitimize it after the fact. The result would be the destruction of every major LLM on the planet as a matter of US law.

This is bad, but it gets worse, as it would mean reinforcement learning on-device could also be compromised. After all, if someone is making a verbal request while watching a movie that's copyrighted, the onus would be on the company to prove such training occurred without processing the copyrighted information - an impossible task. It would essentially kill machine learning wholesale outside of sterile environments, making it impossible to develop capable models that function in the chaos of the real world.

Remedy Number Two: destruction of all copyright protection for models and their output

This is better. Not only does it preserve the research done in this area of AI by not making it de facto illegal, it also ensures the output is for the betterment of society, not corporate balance sheets. Again, since AI companies cannot possibly demonstrate they had appropriate licenses for all training data (namely because they've ensured they cannot disclose who even owns training data, lest it be used in lawsuits against them), this would mean their entire output - the models themselves, anything created by them in part or in whole, and any use of them in any way in the future - could not be copyrighted, period. It enshrines human creativity as the sole source of legitimacy in copyright, and as an added double-whammy ensures that any use of these models in an output would render it unprotectable by copyright law.

In essence, it's a forced open-sourcing of these models in perpetuity, which seems fair given their origins in publicly-funded research and the cumulative (copyrighted) output of humanity. It would liberate these tools back into the hands of the people they're claimed to benefit, rather than walled off in the private data centers of the Technofascist Elite. Finally, it would also dissuade further development of ever-larger foundational models, curtailing many of the negatives associated with their creation and use (labor replacement, environmental harms, wealth concentration, data sovereignty) and shifting the focus toward improvements in efficiency, scalability, and utility.

Are we destroying the wrong thing?

Both of the above remedies make a broad assumption that Courts and Governments reject Fair Use of copyrighted material for AI training purposes, and rightly destroy the results of that misuse in one of two ways. If we're only operating within the confines of the current system, those remedies seem our best - and only - options, given how AI companies have deliberately structured themselves to reduce their legal attack surface as much as possible.

Astute readers might have noticed, though, that these aren't our only options to resolve this conundrum, and that while the second remedy is preferable, it also doesn't go far enough towards addressing very real, longstanding concerns about copyright itself.

So if we're willing to address the system, rather than work within its existing rules, what option is available to us?

Copyright Conundrums

I had the privilege of growing up online during the last "wild west" days of the internet. An era where information truly was free to all who would make an effort to obtain it, where surveillance had yet to scale in such a way as to identify offenders quickly or precisely. Being exposed to this world also meant familiarizing myself with the systems that governed it: peer-to-peer traffic systems, copyright laws, legal enforcement actions, potential penalties and identifiable dysfunctions.

The modern copyright system (much like modern drug laws) is a direct result of the American Empire's hegemony post-WW2, and its sheer dominance of the Western world and global economy. As such, it's written solely to benefit US business at the expense of every other entity in the world. A key example of this is the term of copyright itself: the life of the author plus 70 years for most works, or, for works made for hire and anonymous or pseudonymous works, 95 years after publication or 120 years after creation, whichever ends earlier. These terms are actually fairly recent, dating back to 1976 (when the term was extended from an initial 28 years of validity to 75 years or the life of the author plus 50 years) and 1998 (the current laws), and their proponents cite modern technologies like radio, computers, television, film, and records as reasons for the lengthy extension of copyright terms. Lengthy terms were similarly shoved into the TRIPS Agreement by the United States and its allies, mandating a minimum of 50 years of copyright on all globally-created works, as well as treating computer code and software like literary works, thus granting them the same copyright protections and lengths.

The critique of copyright law is lengthy and nuanced, and for good reason: without it, both independent authors and large corporate conglomerates would be unable to produce livable income, as their work could (and as history has shown, would) be immediately stolen by those with the means to profit from it, cutting the creators out of the market without compensation (like how AI is doing now). Thus, the problem is less whether or not copyright is necessary, but balancing the need for said protections with the broader interests of society as a whole; nobody is arguing that an author's work shouldn't be protected, but there's debate to be had on the utility of intellectual property protections for essential medicines as an example, or on works previously published but no longer available for sale.

This is one such discussion. Obviously LLMs have some degree of utility, however questionable, and it's likely that future AI developments will require even larger sets of data to be trained on in some fashion. However, should we grant a blanket exemption of Fair Use for private enterprise to hoover up the whole of copyrighted content for their own profit-seeking motives? I think the answer is clearly no.

Instead, I argue the time is ripe for a rework of copyright law itself, to be better suited for modern needs. AI is an excellent excuse to sit down and do this hard work, allowing ample room for compromise by both sides of the argument to create a system that better suits the needs of both parties. We could tie the length of copyright to a better metric, such as only allowing corporate copyrights to be valid while the content is available for retail purchase, and scrub onerous, anti-consumer DRM in the process in favor of invisible watermarking - thus opening the floodgates to consumer sales as well as adding to the public domain a glut of prior works that their publishers see no value in selling. We could reduce penalties to be commensurate with actual damages, or promote proactive licensing negotiations through centralized registries instead of (potentially) millions of one-off agreements.

Regardless of which particular side you fall on, the general consensus is that modern copyright laws simply don't work for most of society. AI is exacerbating this of course, but it could also be the impetus needed to sit down, hash out differences, and build a newer, better system in its place.

What can I do now?

For starters, you can click through the links embedded in this essay and do some reading. I will always recommend Wikipedia as an excellent starting point for any new topic, especially as it tries to remain factual above all else, and unbiased or neutral when it comes to any matter of opinion. Knowledge is power, and it's critical to understand the complexities and dependencies of any system you wish to change or reform.

Second, if you're reading this on 15-MAR-2025 before midnight US Eastern time, you can submit a comment via the Federal Register about this exact issue. While it's unlikely to move the needle immediately, the louder we are, the harder we are to ignore.

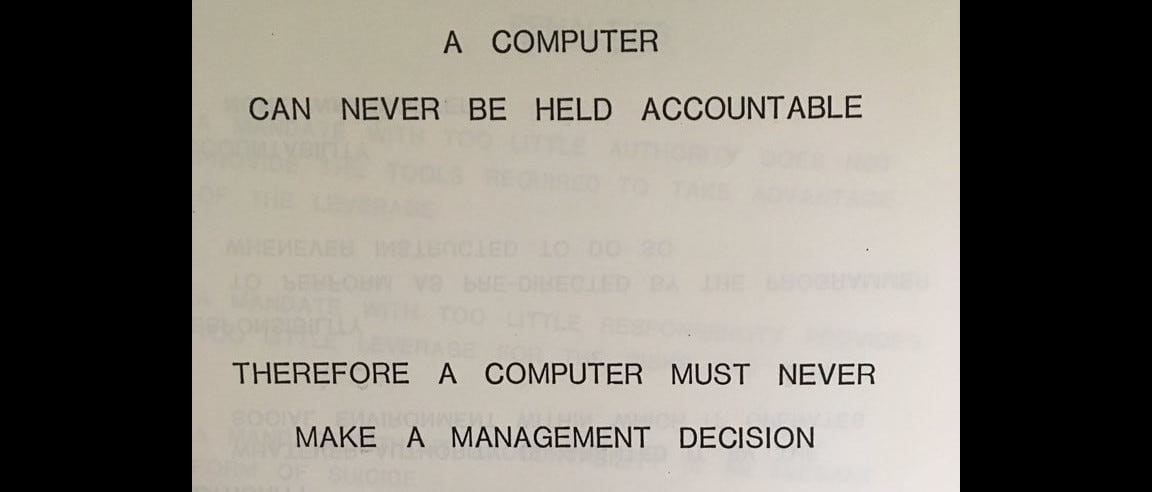

Next, you can continue to raise this grievance wherever possible. Your employer bringing AI into the business? Understand how it works and its risks, and suggest competent alternatives or mandate oversight. Your hospital or doctor using LLMs in diagnostics? Speak up, studies in hand about their error rates or efficacy. The goal isn't to force AI out, but to ensure its use is commensurate with its value and accuracy, to not trust its output blindly or allow it to make decisions on its own since it cannot be held accountable for its errors. Point out the hypocrisy of AI companies promising to protect your data after they've already stolen so much of it, and suggest that maybe these companies can't be trusted after all.

Finally, continue to stay abreast of news in this area in general. I know, I know it's distressing to read the news in modern times, and I know it's a mental drain on anyone in a minority group or their allies. Still, these companies are betting that you'll be too overwhelmed to notice their actions - hypernormalization, in other words - and therefore unable to imagine an alternative to a world they carefully engineered.

Ultimately, your goal is to keep learning, keep informed, and above all else, keep dreaming of the world and systems you want to see. What we have today was never guaranteed yesterday, except for the lack of action to stop and change it.

We have that power. We just have to use it.